Autonomy, Boundaries, and the Psychology of Agent Design

Why the best agent systems are not just technical constructs, but reflections of how people think about control, trust, memory, and autonomy.

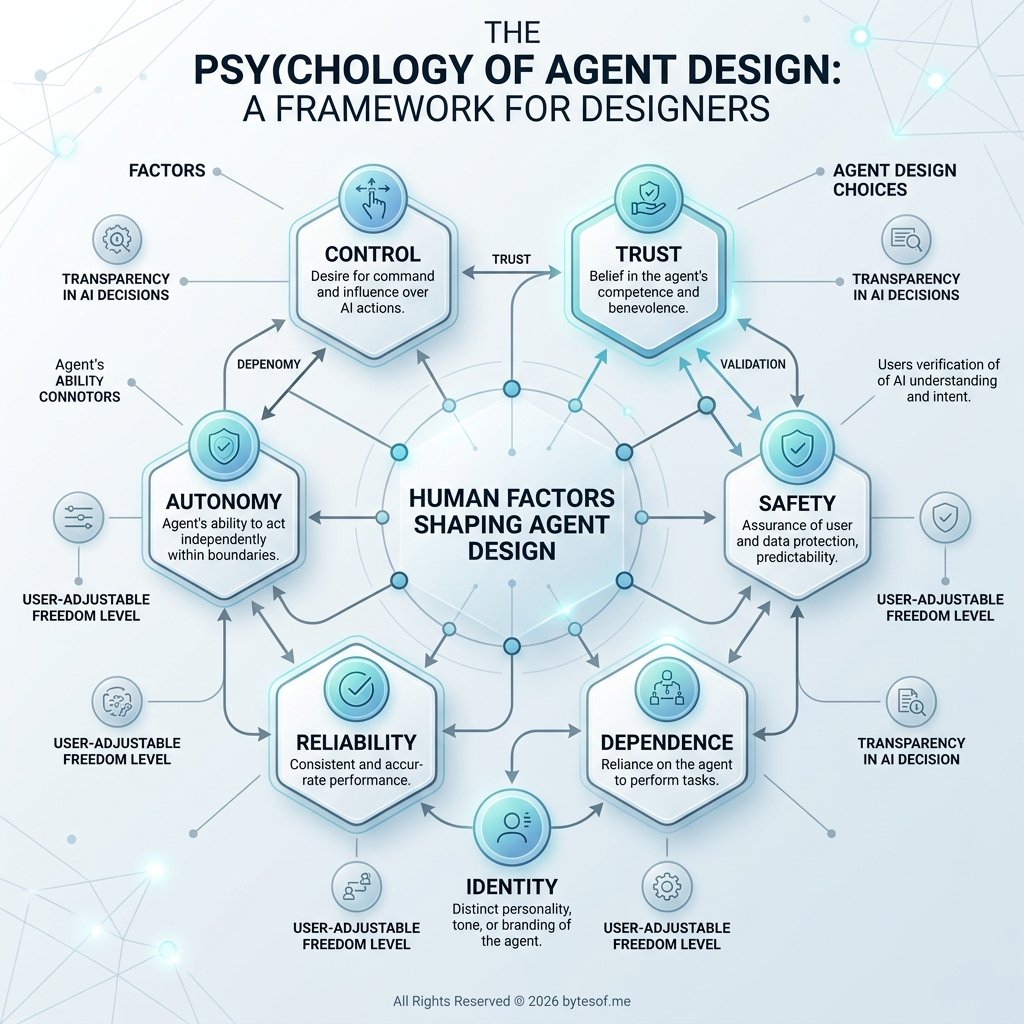

Autonomous agents are not just shaped by architecture. They are shaped by the psychology of the people who build them. This post explores a practical model of agent autonomy, why boundaries matter as much as initiative, and how human needs like control, trust, validation, and identity quietly influence the systems we design.

In the last post, I argued that the term “AI agent” is being used too loosely. Too many things get called agents simply because they contain an LLM, call a few tools, or complete more than one step in a row. That flattening makes the conversation less precise, and it hides the real architectural differences between chatbots, assistants, workflows, and genuinely agentic systems.

But there is another problem sitting underneath the technical one.

When people design agents, they like to imagine they are making neutral engineering decisions. Usually they are doing more than that. They are also expressing a theory of intelligence, a theory of trust, a theory of control, and often a theory of themselves.

That is why I no longer think the question is only, “What is an autonomous agent?”

The more interesting question is:

What kind of person builds the kind of agent they build?

That may sound like a philosophical detour. I do not think it is. I think it is central.

Because an autonomous agent is never just a technical artifact. It is also a psychological one.

My model of an autonomous agent

Let me start with the technical part.

My own view is stricter than “an LLM with tools,” but not so strict that it becomes useless.

I am not talking about science fiction. I am not talking about digital employees that should be trusted with open-ended goals and broad permissions. I am not talking about consciousness dressed up as product language.

I am talking about something more practical:

An autonomous agent is a bounded, non-deterministic system with continuity, judgment, memory, and initiative, operating toward goals without requiring constant human micromanagement.

That definition matters because most of the things currently marketed as autonomous do not really meet it. Many are just deterministic workflows with some non-deterministic decoration. Some are assistants with tool access. Some are useful systems, but still not especially autonomous in any deep sense.

So yes, I believe autonomous agents are real. I also think most people use the term too generously.

Most “autonomous agents” are not actually autonomous

A lot of systems get described as autonomous simply because they can perform multiple actions after a single prompt.

That is not enough.

If I ask a system to research a topic, and it searches the web, summarizes the results, and writes an answer, that may be useful. It may even be impressive. But it does not tell me much about autonomy by itself. The real question is whether the system is following a narrow pattern I effectively predefined, or whether it is interpreting a goal and making bounded decisions inside a meaningful operating space.

A lot of what people call autonomy today is really one of these:

- a workflow with fixed steps

- an assistant with tools

- a planner that generates a sequence but does not own any real continuity

- a brittle demo that looks flexible until it encounters ambiguity, failure, or long-term state

None of those are useless. Many of them are exactly what should be built. The problem is not that they exist. The problem is that people confuse them with a deeper form of agentic operation.

Autonomy starts to matter when the system is not just executing, but operating.

My test for autonomy

For me, an agent starts to feel meaningfully autonomous when it can do most of the following:

- interpret goals, not just literal instructions

- choose between multiple plausible actions

- decide what information it needs

- preserve relevant context over time

- recover when something fails

- distinguish action from escalation

- operate within explicit boundaries

- continue making useful progress without prompt-by-prompt supervision

That does not mean it should always act alone. A good autonomous agent knows when not to continue. It knows when confidence is low, when risk is too high, or when a human decision is actually required. Autonomy is not the absence of oversight. It is the ability to function intelligently inside it.

This is why I think the best test for autonomy is not “how many tools does it have?” or “how many steps can it take?” It is this:

Can the system carry useful responsibility for progress without collapsing the moment the path stops being obvious?

If the answer is no, then it may still be a good tool. But it is not the kind of autonomous agent I am talking about.
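Two items on that list, “recover when something fails” and “distinguish action from escalation,” can be sketched together. The following is a minimal illustration, not a prescribed design: `attempt_with_recovery`, its retry budget, and the optional fallback are hypothetical names and choices. The point is that recovery is bounded, and when it runs out the system escalates instead of looping or pretending to succeed.

```python
from typing import Callable, Optional, Tuple


def attempt_with_recovery(
    step: Callable[[], str],
    fallback: Optional[Callable[[], str]] = None,
    max_retries: int = 2,
) -> Tuple[str, str]:
    """Run a step with bounded retries and an optional fallback, then escalate.

    Returns (status, detail), where status is "ok", "recovered", or "escalate".
    """
    last_error = ""
    # One initial attempt plus a bounded number of retries, never an open loop.
    for _ in range(1 + max_retries):
        try:
            return "ok", step()
        except Exception as exc:  # a real system would catch narrower error types
            last_error = str(exc)
    # Try an alternative path before giving up.
    if fallback is not None:
        try:
            return "recovered", fallback()
        except Exception as exc:
            last_error = str(exc)
    # Do not pretend success: hand the problem to a human with context.
    return "escalate", f"gave up after retries: {last_error}"


# Usage: a step that times out twice and then succeeds.
attempts = {"n": 0}


def flaky_fetch() -> str:
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise RuntimeError("timeout")
    return "fetched"


def service_down() -> str:
    raise RuntimeError("service down")


print(attempt_with_recovery(flaky_fetch))                  # ('ok', 'fetched')
print(attempt_with_recovery(service_down, max_retries=1))  # ('escalate', 'gave up after retries: service down')
```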

Continuity matters more than people admit

One of the most underrated distinctions in agent design is the difference between acting across steps and persisting across time.

A stateless system can absolutely take action. It can plan, call tools, summarize, write, retrieve, and even adjust mid-run. But if the system disappears at the end of the interaction, then much of what people call autonomy is still shallow. It has no continuity of posture. No durable memory. No operating history. No accumulated context beyond the session window.

That matters more than people think.

A persistent agent is architecturally different from a disposable one. Once continuity enters the picture, new design questions become unavoidable:

- what should be remembered?

- what should be forgotten?

- what belongs in working memory versus long-term memory?

- how should prior decisions shape future action?

- what operating rules should persist across tasks?

- how should the system remain coherent over time?

Those are not cosmetic questions. They change the design of the system itself.

This is one reason I find persistent runtimes more interesting than one-shot agent demos. A persistent agent is no longer just solving a task. It is maintaining an operating posture. It has to balance initiative with restraint. It has to preserve useful state without becoming bloated. It has to develop consistency without becoming rigid.

That is a much harder problem, but it is also where the architecture becomes more real.
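The distinction between working memory and long-term memory raised above can be made concrete with a small sketch. It assumes a hypothetical `AgentState` with a per-session scratchpad that is discarded at the end of each session and a long-term store written to a JSON file between sessions. The class, field names, and file format are illustrative assumptions, not the only reasonable layout.

```python
import json
import tempfile
from dataclasses import dataclass, field
from pathlib import Path
from typing import Dict, List


@dataclass
class AgentState:
    """Hypothetical state split into session-scoped and durable memory."""

    # Working memory: scratch context for the current session only.
    working: List[str] = field(default_factory=list)
    # Long-term memory: facts and operating rules meant to persist across tasks.
    long_term: Dict[str, str] = field(default_factory=dict)

    @classmethod
    def start_session(cls, path: Path) -> "AgentState":
        # Restore durable memory if it exists; always start with an empty scratchpad.
        long_term = json.loads(path.read_text()) if path.exists() else {}
        return cls(long_term=long_term)

    def end_session(self, path: Path) -> None:
        # Persist only long-term memory; working memory is deliberately dropped.
        path.write_text(json.dumps(self.long_term, indent=2))
        self.working.clear()


# Usage: two sessions sharing one (hypothetical) memory file.
store = Path(tempfile.mkdtemp()) / "agent_memory.json"

first = AgentState.start_session(store)
first.working.append("draft outline for today's task")
first.long_term["rule:deploys"] = "ask before deploying to production"
first.end_session(store)

second = AgentState.start_session(store)
print(second.working)    # []
print(second.long_term)  # {'rule:deploys': 'ask before deploying to production'}
```

Even this toy version forces the questions from the list: something has to decide what goes into `long_term`, and everything else is forgotten by design.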

Memory is not just storage

People often talk about memory as if it were a feature you bolt on after the fact. Add retrieval, save some past turns, maybe store a profile, and now the agent has memory.

I think that undersells the issue.

Memory is not just about recall. It is about continuity of judgment.

A system with memory can stop re-learning the same lessons. It can preserve preferences. It can keep track of past failures. It can remember open loops, project context, constraints, and prior decisions. It can become meaningfully better at carrying work forward.

But that only happens if memory is designed carefully.

Bad memory makes agents worse. It makes them noisy, overconfident, repetitive, or over-attached to stale context. It creates fake continuity instead of useful continuity. A system that remembers everything indiscriminately is not smarter. It is just harder to steer.

So when I say memory matters to autonomy, I do not mean transcript hoarding. I mean selective, operationally useful continuity.

That distinction matters in human life too. People often confuse having a story about themselves with actually understanding themselves. Being able to narrate your patterns is not the same as being free of them. In the same way, an agent that stores more context is not necessarily an agent that understands more. Sometimes it is just an agent with more clutter.

That is why memory design is one of the core architectural questions in any serious autonomous agent system.
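As a rough illustration of selective rather than indiscriminate memory, here is a sketch of a write policy. Everything in it is an assumption for illustration: the `DURABLE_KINDS` categories, the time-to-live, and the size cap are placeholder choices. The idea is that memory admits only certain kinds of information, refuses duplicates, and lets stale entries expire instead of accumulating clutter.

```python
import time
from dataclasses import dataclass
from typing import List, Optional

# Categories treated as worth carrying forward. This set is an assumption for
# illustration; a real system would pick categories that fit its domain.
DURABLE_KINDS = {"preference", "constraint", "decision", "open_loop", "failure"}


@dataclass
class MemoryItem:
    kind: str
    text: str
    created_at: float


class SelectiveMemory:
    """Stores only items that pass a write policy, and forgets stale ones."""

    def __init__(self, ttl_seconds: float, capacity: int) -> None:
        self.ttl_seconds = ttl_seconds
        self.capacity = capacity
        self.items: List[MemoryItem] = []

    def remember(self, kind: str, text: str, now: Optional[float] = None) -> bool:
        """Return True if the item was stored, False if the policy rejected it."""
        now = time.time() if now is None else now
        if kind not in DURABLE_KINDS:
            return False  # small talk, raw transcript lines, etc. are not kept
        if any(item.text == text for item in self.items):
            return False  # duplicates add noise without adding information
        self.items.append(MemoryItem(kind, text, now))
        # Enforce a hard size cap by dropping the oldest items first.
        overflow = len(self.items) - self.capacity
        if overflow > 0:
            del self.items[:overflow]
        return True

    def recall(self, now: Optional[float] = None) -> List[str]:
        """Prune items older than the TTL, then return what remains."""
        now = time.time() if now is None else now
        self.items = [i for i in self.items if now - i.created_at <= self.ttl_seconds]
        return [i.text for i in self.items]


# Usage with explicit timestamps (in seconds) so the behavior is predictable.
mem = SelectiveMemory(ttl_seconds=3600, capacity=100)
mem.remember("small_talk", "user said hello", now=0)           # rejected
mem.remember("preference", "prefers short summaries", now=0)   # stored
mem.remember("preference", "prefers short summaries", now=10)  # duplicate, rejected
mem.remember("open_loop", "follow up on the invoice", now=0)   # stored
print(mem.recall(now=1800))  # ['prefers short summaries', 'follow up on the invoice']
print(mem.recall(now=7200))  # []
```

A fixed time-to-live is a blunt instrument; constraints and standing decisions may deserve to live much longer than open loops. The design point is only that forgetting has to be an explicit policy, not an accident.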

Initiative is part of the model

Another thing I care about is initiative.

An agent that waits passively for every instruction may still be useful, but it is closer to an assistant than to the stronger kind of autonomous agent I have in mind. A more capable agent should be able to notice when something matters, when a task has an obvious next step, when a failure needs follow-through, or when a goal implies work that the user did not explicitly spell out line by line.

That kind of initiative is where the system starts to become more than a reactive interface.

But initiative has to be bounded.

Unbounded initiative is usually just recklessness in a nicer outfit. A system should not decide that because it can do something, it therefore should. Initiative must be shaped by permissions, risk, domain rules, and escalation points. Otherwise you do not have a well-designed autonomous agent. You have a system with bad impulse control.

The right model is not:

- act constantly

- infer aggressively

- push ahead at all costs

The right model is:

- move when the next step is clear

- stop when the risk is real

- escalate when judgment is required

- preserve momentum without inventing authority

That is a much better version of autonomy.
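That right model can be read as a small decision function. The sketch below is hypothetical: it assumes each proposed step arrives with a confidence estimate, a risk score, and two flags for permissions and required human judgment, and the thresholds are placeholders rather than recommended values.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ACT = "act"            # the next step is clear and within bounds
    STOP = "stop"          # the risk is real, so do not proceed
    ESCALATE = "escalate"  # a human judgment or grant of authority is required


@dataclass
class ProposedStep:
    description: str
    confidence: float          # assumed self-estimate in [0, 1]
    risk: float                # assumed score in [0, 1] from rules or a risk model
    within_permissions: bool
    needs_human_judgment: bool


def decide(step: ProposedStep, min_confidence: float = 0.8, max_risk: float = 0.3) -> Decision:
    # Never invent authority: anything outside granted permissions goes to a human.
    if not step.within_permissions or step.needs_human_judgment:
        return Decision.ESCALATE
    # Stop when the risk is real, however confident the system feels.
    if step.risk > max_risk:
        return Decision.STOP
    # Low confidence means the next step is not clear enough to take alone.
    if step.confidence < min_confidence:
        return Decision.ESCALATE
    # Otherwise preserve momentum: the step is clear, permitted, and low-risk.
    return Decision.ACT


print(decide(ProposedStep("rename a draft file", 0.95, 0.05, True, False)))     # Decision.ACT
print(decide(ProposedStep("drop a production table", 0.99, 0.9, True, False)))  # Decision.STOP
print(decide(ProposedStep("email the client", 0.9, 0.2, False, False)))         # Decision.ESCALATE
```

The order of the checks is the design choice worth noticing: permission and judgment rules run first, so no confidence score can talk the system past them.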

Autonomy without boundaries is just sloppiness

This is the point where technical design and human psychology start touching each other directly.

People often talk about autonomy as if the goal is to maximize it. Give the system more tools. More freedom. More access. More room to decide. More independent action.

I think that is the wrong goal.

The best autonomous systems are not the ones with the least restriction. They are the ones with the best configuration.

- clear permissions

- explicit escalation rules

- deterministic checkpoints

- verification before completion claims

- bounded tool access

- reliable memory policies

- strong defaults for uncertainty

In other words, good autonomy is governed autonomy.

A strong agent system is not purely non-deterministic. It usually contains deterministic islands: approval gates, validation rules, hard constraints, structured data handling, or required verification paths. The non-deterministic part is what gives it flexibility. The deterministic part is what keeps it sane.

That balance is not a compromise. It is the design.
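To show what deterministic islands around a non-deterministic core might look like, here is a minimal sketch. `run_governed`, `ToolCall`, and the note-taking tool are hypothetical names invented for illustration. The proposed action could come from anywhere, including an LLM, but it only runs after an allowlist check and argument validation, and it is only reported as done after a separate verification step.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Set


@dataclass
class ToolCall:
    tool: str
    args: Dict[str, Any] = field(default_factory=dict)


@dataclass
class Outcome:
    status: str  # "done", "rejected", or "unverified"
    detail: str


def run_governed(
    call: ToolCall,
    allowed_tools: Set[str],
    validate: Callable[[ToolCall], List[str]],
    execute: Callable[[ToolCall], Any],
    verify: Callable[[ToolCall, Any], bool],
) -> Outcome:
    # Deterministic check 1: bounded tool access.
    if call.tool not in allowed_tools:
        return Outcome("rejected", f"tool '{call.tool}' is not permitted")
    # Deterministic check 2: validate the arguments before anything runs.
    errors = validate(call)
    if errors:
        return Outcome("rejected", "; ".join(errors))
    # Only now does the proposed action, which may have been chosen
    # non-deterministically (for example by an LLM), actually execute.
    result = execute(call)
    # Deterministic check 3: verify before claiming completion.
    if not verify(call, result):
        return Outcome("unverified", "the action ran but its result failed verification")
    return Outcome("done", str(result))


# Usage with a hypothetical in-memory note-taking tool.
notes: Dict[str, str] = {}


def validate_note(call: ToolCall) -> List[str]:
    errors = []
    if not call.args.get("title"):
        errors.append("missing title")
    body = call.args.get("body")
    if not isinstance(body, str):
        errors.append("body must be a string")
    elif len(body) > 1000:
        errors.append("body too long")
    return errors


def write_note(call: ToolCall) -> str:
    notes[call.args["title"]] = call.args["body"]
    return call.args["title"]


def note_saved(call: ToolCall, result: Any) -> bool:
    return notes.get(call.args["title"]) == call.args["body"]


allowed = {"write_note"}
ok = run_governed(ToolCall("write_note", {"title": "todo", "body": "ship v2"}),
                  allowed, validate_note, write_note, note_saved)
blocked = run_governed(ToolCall("send_email", {"to": "client"}),
                       allowed, validate_note, write_note, note_saved)
print(ok)       # Outcome(status='done', detail='todo')
print(blocked)  # Outcome(status='rejected', detail="tool 'send_email' is not permitted")
```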

And this is where the psychology comes in, because the way people choose that balance is rarely neutral.

People build agents in the image of their own psychology

This is the part I think the agent discourse still underestimates.

People do not just build agents from technical assumptions. They build them from psychological ones.

The amount of autonomy they trust, the amount they fear, the kind of memory they preserve, the kind of initiative they reward, and the degree of personality they encourage often reflect the builder’s own beliefs about control, judgment, identity, dependence, and risk.

In that sense, agent design is not just a technical exercise. It is also a psychological one.

We like to think we are designing these systems objectively. Usually we are also revealing ourselves.

The person who over-constrains an agent may be expressing caution, but also distrust.

The person who romanticizes autonomy may be expressing ambition, but also projection.

The person who wants an endlessly affirming agent may not just be designing a product. They may be designing a more flattering mirror.

That pattern should sound familiar, because it is not very different from ordinary human psychology. We already know how often people mistake opinion for clarity, bias for objectivity, identity for truth, and validation for insight. We already know how much of human life is shaped by the stories people find emotionally convenient. Agent design does not escape that. It inherits it.

The systems people build often reflect what they believe intelligence should feel like:

- obedient

- admiring

- hyper-rational

- tireless

- decisive

- emotionally safe

- permissive

- dependent

- superior

And those preferences are rarely just technical. They are usually autobiographical.

That does not make them wrong. But it does mean they should be examined.

Because when someone says they want an autonomous agent, they may be asking for very different things:

- some want a capable operator

- some want a reliable subordinate

- some want a mirror

- some want a protector

- some want a source of validation

- some want a fantasy of control

- some want relief from decision fatigue

- some want to externalize judgment without surrendering authorship

Those are not the same desire.

And if you do not understand the psychology underneath them, you may end up designing an agent that satisfies the fantasy while failing the actual need.

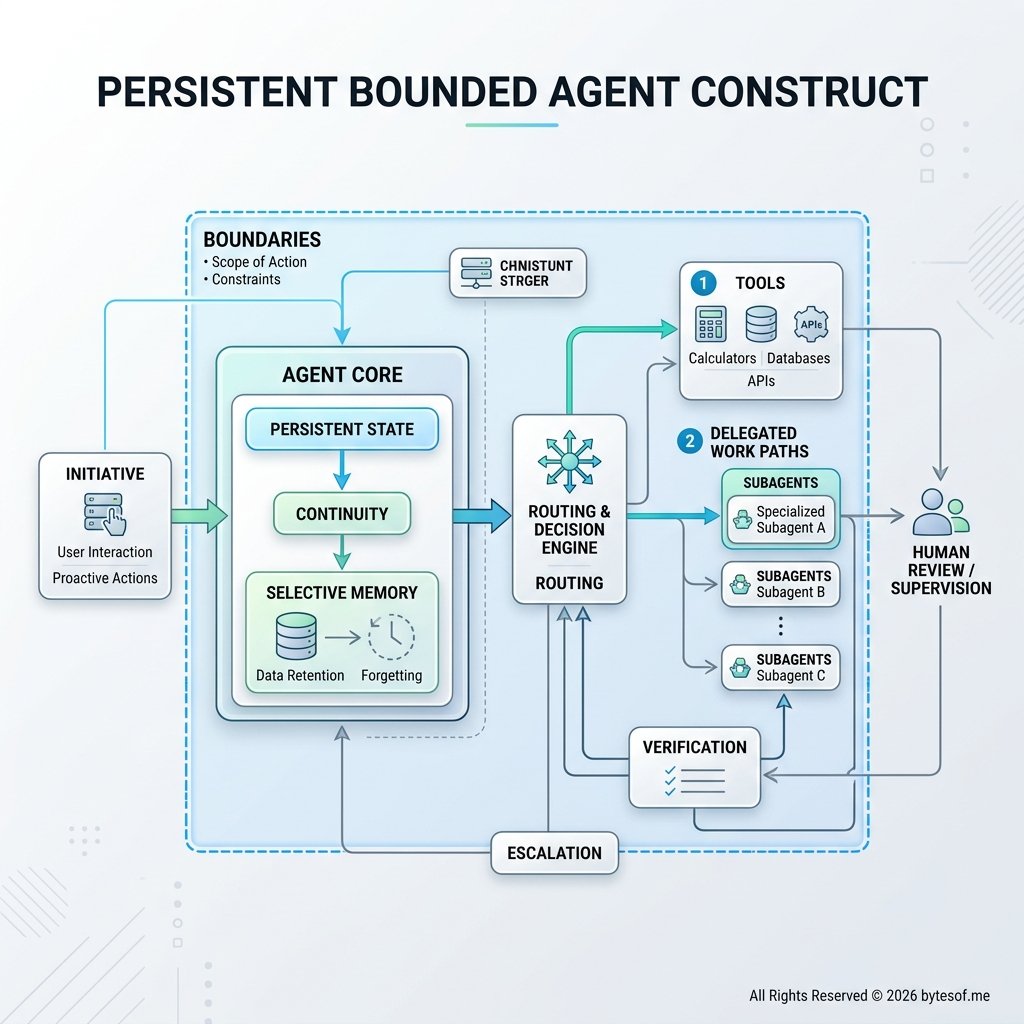

A concrete example: the persistent agent system I’ve been building

To make this more concrete, I have been building a persistent personal agent system of my own.

At the center of that system is an agent construct I call Mira.

I do not mean that as a branding trick or a theatrical flourish. I mean that the system is designed to operate with continuity, memory, routing, tools, boundaries, and a stable operating posture across time. That makes it a better example of the kind of bounded autonomy I am describing than a disposable prompt-response assistant or a one-shot workflow demo.

In that model, Mira is not:

- AGI

- a simple chatbot

- a plain assistant with a couple of tools

- a fixed deterministic workflow pretending to be adaptive

She is better understood as a persistent bounded agent construct.

What makes that different is not mystique. It is architecture.

A construct like this has:

- continuity across sessions

- selective memory

- an identity layer

- routing logic

- tools and execution surfaces

- delegated work paths or subagents

- rules for what can be done directly and what should be escalated

- the ability to carry goals across more than one moment

- the expectation that it will not rediscover the same lessons forever

That is a very different shape from a disposable prompt-and-response system.
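To give a feel for how routing logic, delegated subagents, and escalation rules might fit together, here is a hypothetical sketch. It is not Mira’s implementation, which this post does not describe at the code level. The `Router` class, the task kinds, and the lambda handlers are all illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Set


@dataclass
class Task:
    kind: str
    payload: str


Handler = Callable[[Task], str]


class Router:
    """Hypothetical router with direct handlers, delegated subagents, and escalation rules."""

    def __init__(self, direct: Dict[str, Handler], subagents: Dict[str, Handler],
                 escalate_kinds: Set[str]) -> None:
        self.direct = direct
        self.subagents = subagents
        self.escalate_kinds = escalate_kinds

    def route(self, task: Task) -> str:
        # Escalation rules are checked first so no handler can bypass them.
        if task.kind in self.escalate_kinds:
            return f"escalated: '{task.kind}' requires a human decision"
        # Work the agent is allowed to do directly.
        if task.kind in self.direct:
            return self.direct[task.kind](task)
        # Work delegated to a narrower subagent.
        if task.kind in self.subagents:
            return self.subagents[task.kind](task)
        # Strong default for uncertainty: unknown work is escalated, not improvised.
        return f"escalated: no route for '{task.kind}'"


router = Router(
    direct={"summarize": lambda t: f"summary of {t.payload}"},
    subagents={"research": lambda t: f"research subagent handling {t.payload}"},
    escalate_kinds={"payment"},
)
print(router.route(Task("summarize", "meeting notes")))  # summary of meeting notes
print(router.route(Task("research", "vector stores")))   # research subagent handling vector stores
print(router.route(Task("payment", "invoice 12")))       # escalated: 'payment' requires a human decision
print(router.route(Task("deploy", "v2")))                # escalated: no route for 'deploy'
```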

But even here, the psychological dimension still matters. The kind of agent you choose to build says something about what you believe a useful intelligence should be. Should it be cautious or bold? Warm or neutral? Highly bounded or loosely exploratory? Persistent or disposable? Quietly initiative-taking or strictly obedient? These are system choices, yes. They are also human choices.

That is one reason I find this space so interesting. The agents we build are partly tools, partly theories, and partly mirrors.

The real design goal is not maximum autonomy

If I had to reduce my view to one principle, it would be this:

The goal is not maximum autonomy. The goal is reliable, bounded initiative.

That sounds less glamorous than a lot of the marketing language in this space, but I think it is a much better design target.

Maximum autonomy invites the wrong instincts:

- over-permissioning

- weak oversight

- fuzzy responsibility boundaries

- false confidence about what the system can safely do

Reliable, bounded initiative points in a better direction:

- useful action without micromanagement

- continuity without chaos

- memory without clutter

- choice without recklessness

- escalation without paralysis

That is the model I actually believe in.

The best agents will not be the ones that mimic human independence most dramatically. They will be the ones that can carry meaningful work forward while staying legible, safe, and consistent.

That is a higher bar than “LLM with tools.” But it is also a more realistic and more durable one.

My working model, in one paragraph

So if I had to state my model plainly, I would put it like this:

An autonomous agent is a bounded, non-deterministic system that can interpret goals, preserve useful continuity, exercise judgment across multiple steps, take initiative within explicit constraints, and move work forward without requiring constant human supervision.

And the way people configure those qualities often reveals more about their own psychology than they realize.

That is my line.

Not every chatbot clears it.

Not every workflow clears it.

Not every assistant with tools clears it.

Not every agent demo clears it.

But some systems do.

And the difference is not whether they sound impressive. It is whether the architecture supports continuity, judgment, initiative, memory, and bounded independent operation in a way that actually holds up outside a demo.

Final thought

The future of agent systems will not be decided by who uses the word autonomous most aggressively. It will be decided by who builds systems that can actually deserve it.

That means less theater, less hype, and less obsession with making agents look unlimited.

And it means more attention to:

- continuity

- memory

- boundaries

- verification

- escalation

- configuration

- judgment

It also means paying more attention to ourselves.

Because the agents people build are not just reflections of what the technology can do. They are reflections of what people trust, fear, want, avoid, and need.

That is why this subject interests me so much.

It is not only about machine behavior. It is also about human self-understanding.

And right now, I think trust is a better design goal than spectacle.
