Why tool use is not agency, why workflows are not autonomy, and why deterministic systems should not be confused with agents.

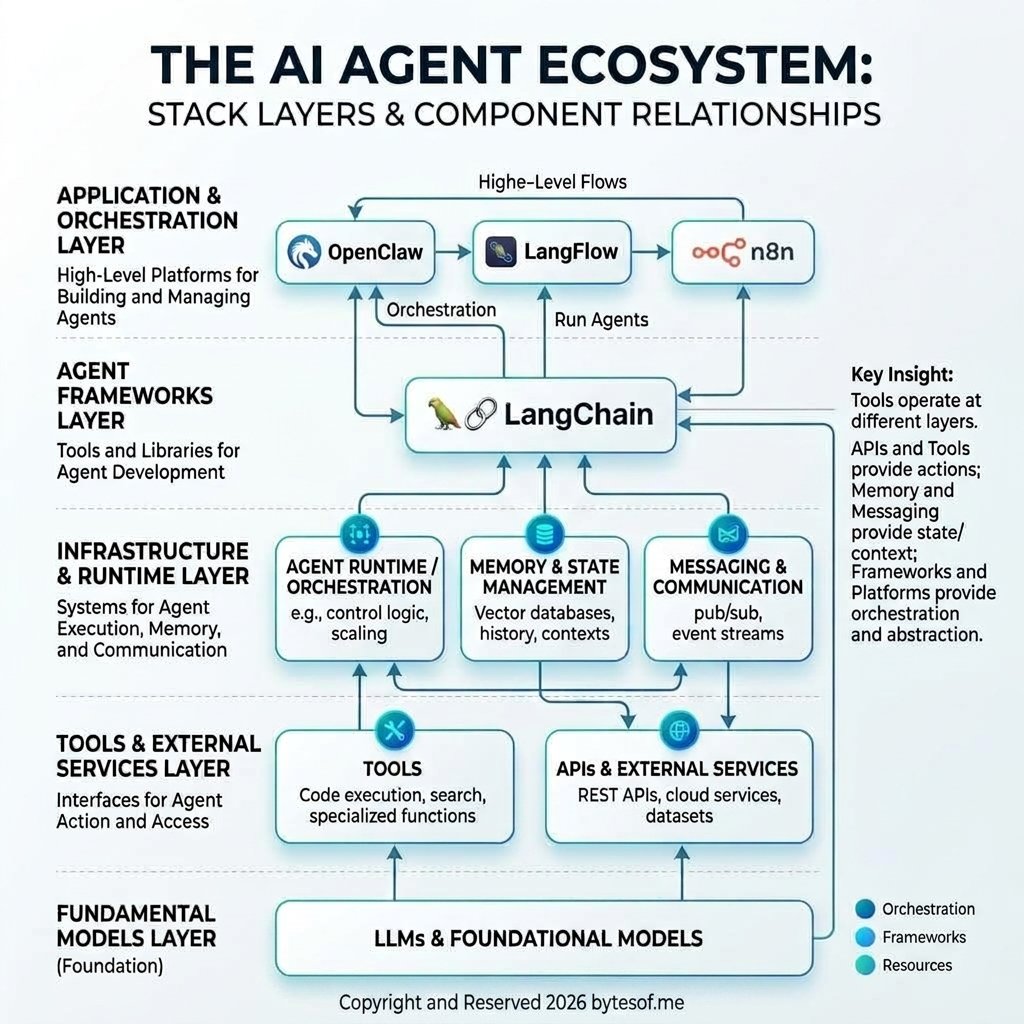

The term AI agent is being used far too loosely. A chatbot with tools, a LangFlow graph, an n8n automation, a LangChain tool loop, and a persistent runtime like OpenClaw are not all the same thing. This post offers a clearer definition of what an AI agent actually is, with a practical distinction between deterministic workflows and non-deterministic, bounded agent systems.

The term AI agent is starting to suffer the same fate as words like platform, AI-powered, and digital transformation. It gets used so broadly that it stops being useful.

Today, a lot of things get called agents:

- a chatbot with tool calling

- a LangChain chain with a few decision steps

- a LangFlow canvas that branches between API calls

- an n8n automation with an LLM in the middle

- a persistent system like OpenClaw that can route work, use tools, remember context, and operate across sessions

These are not all the same thing.

They may all use an LLM. They may all look impressive in a demo. But if we use the word agent for every one of them, we lose the ability to talk clearly about architecture, autonomy, reliability, and control.

So before building one, buying one, or arguing about one, it helps to ask a more boring but more important question:

What actually qualifies as an AI agent?

The problem is not the tools. It is the looseness.

The current tooling ecosystem makes the confusion worse.

If you use LangChain, you can build chains, retrieval workflows, tool loops, and agent patterns. If you use LangFlow, you can visually wire prompts, tools, branches, and memory together. If you use n8n, you can automate multi-step flows with LLM calls inside them. If you use OpenClaw, you can create a more operational system with tools, memory, routing, sessions, subagents, and persistent behavior over time.

All of these can be part of agent systems.

But none of them, by themselves, make something an agent.

That distinction matters because people often confuse tool use with agency, and they also confuse automation with autonomy.

A system that calls a weather API when asked for the weather is not interestingly agentic. It is tool-enabled. A system that decides whether it needs weather data, retrieves it, combines it with your calendar, notices you are leaving soon, and warns you to take an umbrella is closer. And a system that can do this consistently, with memory, boundaries, and the ability to decide when to ask versus when to act, is closer still.

That is not just more capability. It is a different operating model.
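To make the weather example concrete, here is a minimal Python sketch. Everything in it is hypothetical: `get_forecast` is a stub rather than a real weather API, `Event` is a made-up calendar record, and the hard-coded thresholds stand in for judgment that a real agent would get from a model. The only point is where the decision to use the tool comes from.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


def get_forecast(city: str) -> dict:
    """Stub tool (assumption): a real system would call a weather service here."""
    return {"city": city, "rain_probability": 0.8}


@dataclass
class Event:
    """Hypothetical calendar entry."""
    title: str
    starts_at: datetime
    outdoors: bool


def tool_enabled_reply(user_message: str, city: str) -> Optional[str]:
    """Reactive: the tool is only touched when the user asks for it directly."""
    if "weather" in user_message.lower():
        forecast = get_forecast(city)
        return f"Rain probability in {city}: {forecast['rain_probability']:.0%}"
    return None


def proactive_check(now: datetime, events: List[Event], city: str) -> Optional[str]:
    """Closer to agentic: the system decides whether weather data is relevant at all."""
    leaving_soon = [
        e for e in events
        if e.outdoors and timedelta(0) <= e.starts_at - now <= timedelta(hours=1)
    ]
    if not leaving_soon:
        return None  # nothing relevant coming up, so no tool call is made
    forecast = get_forecast(city)
    if forecast["rain_probability"] >= 0.5:
        return f"You leave for '{leaving_soon[0].title}' soon and rain is likely. Take an umbrella."
    return None
```

In the first function the user drives every tool call. In the second, the system combines calendar and weather on its own initiative and stays quiet when there is nothing worth saying.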

Not everything with an LLM in it is an agent

This is where most discussions get sloppy.

Let’s separate a few things that are constantly blended together.

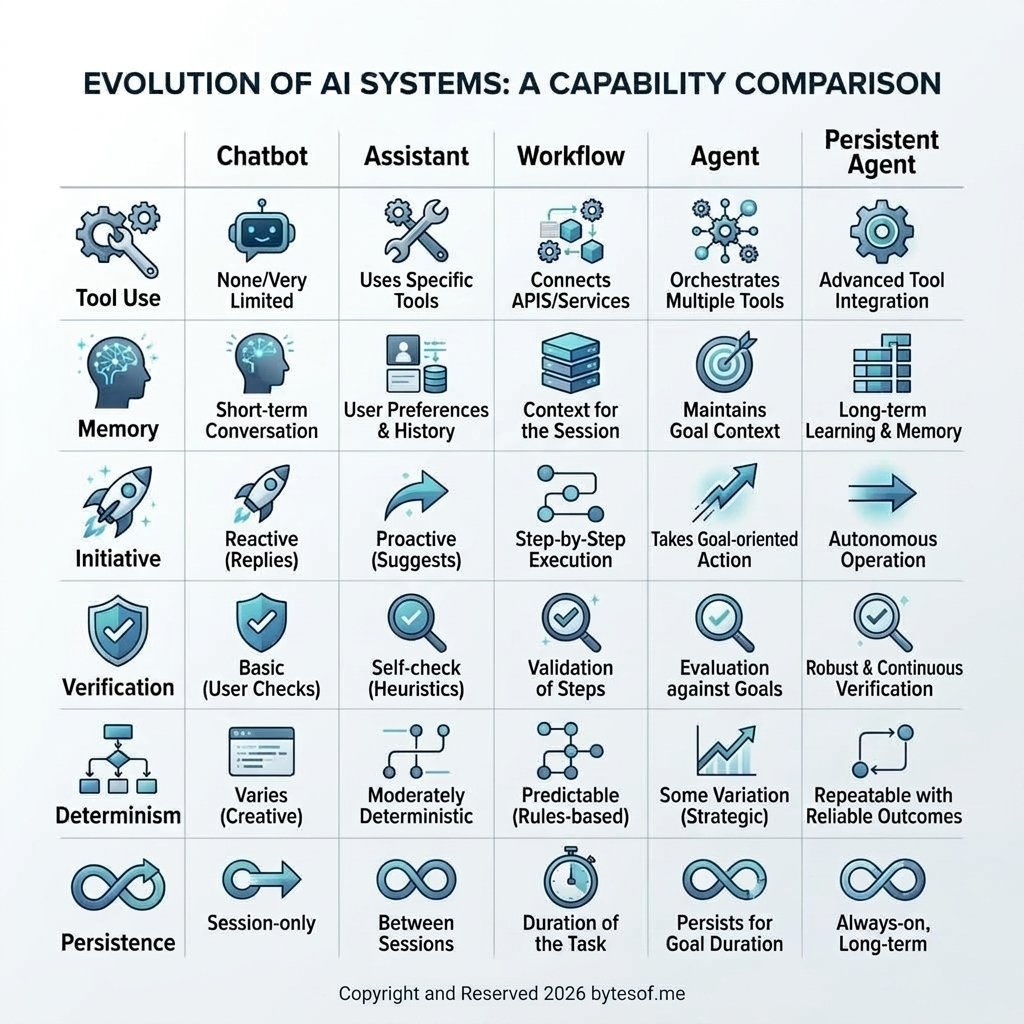

1. Chatbot

A chatbot is mostly conversational. You ask, it answers. Even if the answers are good, its posture is reactive.

2. Assistant

An assistant usually has tools. It can search, read files, send messages, update a calendar, maybe run code. It can do more than talk. But many assistants are still highly dependent on the user driving every step.

3. Workflow

A workflow is structured automation. It may include an LLM, but most of the logic is predefined. This is where tools like n8n and many LangFlow graphs live. These systems can be extremely useful, but usefulness is not the same as agency.

4. Agent

An agent, in the stronger sense, can interpret a goal, choose among actions, track state across steps, use tools within boundaries, and move work forward without needing constant prompt-by-prompt steering.

That last category is the one people usually want when they say “agent,” but a lot of systems never really get there.

They are still mostly workflows wearing a cooler name.

Tool calling is not agency

This is probably the most important distinction in the whole discussion.

A lot of demos look impressive because the model can call tools:

- search the web

- open a document

- hit an API

- query a database

- trigger a Slack or Discord message

That is useful. It is often necessary. But it is not enough.

If the model is just responding to a direct instruction with a function call, you do not yet have much agency. You have a model attached to an action surface.

Agency begins to matter when the system can do things like:

- decide what information it needs

- choose among multiple possible actions

- track progress across multiple steps

- recover when something fails

- verify that an action actually worked

- know when to escalate instead of continuing blindly

- preserve state and context in a meaningful way

That is why a lot of “agents” built with LangChain are really structured tool loops, and a lot of systems built in LangFlow are better described as visual workflows. That is not an insult. Some of those systems are exactly what should be built. But not every multi-step LLM system deserves the stronger label.
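The capabilities listed above can be sketched as a small control loop. This is an illustration under stated assumptions, not LangChain, LangFlow, or OpenClaw code: `RunState`, `run`, and the `Policy` callable are names I made up, and `Policy` stands in for whatever model makes the choice in a real system. Each tool returns `True` only when its effect was verified.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class RunState:
    """State carried across steps (hypothetical structure)."""
    goal: str
    history: List[Tuple[str, bool]] = field(default_factory=list)  # (action, verified)
    failures: int = 0


Tool = Callable[[], bool]                      # True means the effect was verified
Policy = Callable[[RunState], Optional[str]]   # stand-in for the model's choice


def run(goal: str, tools: Dict[str, Tool], choose: Policy,
        max_steps: int = 10, max_failures: int = 2) -> Tuple[str, RunState]:
    state = RunState(goal)
    for _ in range(max_steps):
        action = choose(state)               # choose among possible actions
        if action is None:
            return "done", state             # the policy judges the goal met
        if action not in tools:
            return "escalated", state        # outside the permitted action surface
        verified = tools[action]()           # act, then check that it actually worked
        state.history.append((action, verified))  # track progress across steps
        if not verified:
            state.failures += 1              # a later step may retry (recovery)
            if state.failures >= max_failures:
                return "escalated", state    # stop instead of continuing blindly
    return "escalated", state                # step budget exhausted
```

A plain tool call is just the `tools[action]()` line. The parts around it (choosing, verifying, tracking, escalating) are where agency starts to show up.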

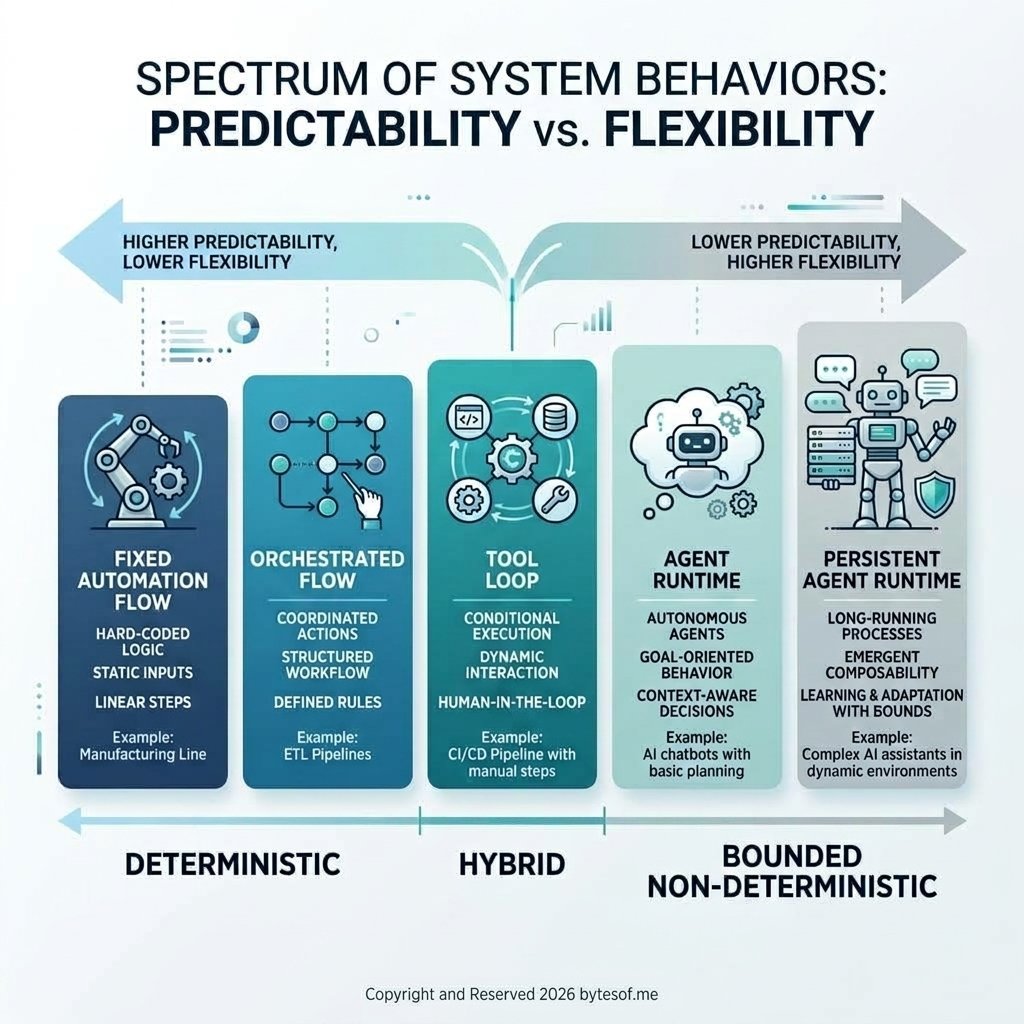

Deterministic systems vs non-deterministic agents

A big part of the confusion comes from mixing up deterministic systems and non-deterministic systems.

A deterministic system is designed around a predetermined path. Given the same input and the same conditions, it takes the same sequence of steps, even if the text an LLM produces along the way varies. Traditional workflows work this way. Many automations built in n8n work this way. A lot of LangFlow setups also work this way, even if they contain LLM calls.

A non-deterministic system is different. It may pursue the same goal in different ways. It may choose different tools, different sequences, different intermediate actions, or different recovery paths depending on the context. That is where agent behavior starts to become more meaningful.

A useful way to think about it is this:

Workflows are designed around predetermined paths. Agents are designed around bounded choice.

That does not mean agents are random. Good agent systems are not chaotic. Their non-determinism is constrained by goals, boundaries, permissions, memory, and verification rules. But the system is not simply walking a fixed script. It is making decisions inside a controlled operating space.

This is why many so-called agents are better described as deterministic workflows with LLM decoration. They may use a model, they may call tools, and they may appear dynamic, but the real control flow is still largely scripted.

That is also why persistent systems like OpenClaw are more interesting to me than many one-shot agent demos. They force harder questions:

- what should be deterministic?

- where should bounded non-determinism be allowed?

- what should be verified before action is considered complete?

- when should the system escalate to a human?

- how should memory affect future behavior?

Those are real architectural questions. They matter much more than whether the demo looked clever.
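The contrast between a predetermined path and bounded choice can be shown in a few lines. This is a toy sketch, not how any particular framework works: `run_workflow`, `run_bounded`, and `toy_proposer` are hypothetical names, and `toy_proposer` is a rule-based stand-in for a model that would pick the next step in a real agent.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Step = Callable[[str], str]
# Hypothetical stand-in for a model: given the payload and the trace so far,
# it names the next step, or "stop" when it considers the work finished.
Proposer = Callable[[str, List[str]], str]


def run_workflow(steps: Sequence[Step], payload: str) -> str:
    """Predetermined path: the same steps in the same order on every run."""
    for step in steps:
        payload = step(payload)
    return payload


def run_bounded(propose: Proposer, allowed: Dict[str, Step],
                payload: str, max_steps: int = 5) -> Tuple[str, List[str]]:
    """Bounded choice: the proposer picks the path, but only from `allowed`."""
    trace: List[str] = []
    for _ in range(max_steps):
        name = propose(payload, trace)
        if name == "stop":
            break
        if name not in allowed:
            raise PermissionError(f"step {name!r} is outside the allowed set")
        payload = allowed[name](payload)
        trace.append(name)
    return payload, trace


def toy_proposer(payload: str, trace: List[str]) -> str:
    """Picks only the steps this particular input still needs."""
    if payload != payload.strip():
        return "strip"
    if payload != payload.upper():
        return "upper"
    return "stop"
```

With `allowed = {"strip": str.strip, "upper": str.upper}`, the bounded runner reaches the same result as the fixed workflow but takes a different path depending on the input, and it refuses any step that is not in the allowed set. The allowlist and the step budget are the deterministic part. The choice of path is where the bounded non-determinism lives.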

Autonomy is a spectrum, not a badge

People also use the word autonomous too casually.

A system is not autonomous just because it can take three steps in a row without you interrupting it.

That is automation. Sometimes useful automation, sometimes brittle automation, but still automation.

Real autonomy exists on a spectrum.

At the low end:

- a model picks from a small set of tools

- it follows a narrow task

- it waits for explicit user instruction

In the middle:

- it plans multiple steps

- tracks intermediate state

- retries or adapts

- asks clarifying questions when needed

Further up:

- it maintains continuity across sessions

- has durable memory

- can route work to subagents or other systems

- can act proactively within boundaries

- knows what it is allowed to do and what it must verify

That last layer is where systems like OpenClaw get more interesting than many one-shot demos. Not because persistence automatically makes something better, but because persistence forces you to think about identity, memory, approvals, safety, routing, verification, and long-lived behavior. Those are real operational concerns. They move the conversation past “look, it called a tool” into “what kind of system are we actually building?”
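One way to keep "autonomous" from becoming a badge is to treat it as an ordered level derived from concrete capabilities. The sketch below is my own simplification: the three levels mirror the tiers above, but the `Capabilities` fields and the rule that every capability in a tier must be present are assumptions, not a standard.

```python
from dataclasses import dataclass
from enum import IntEnum


class Autonomy(IntEnum):
    LOW = 1      # small tool set, narrow task, waits for explicit instruction
    MIDDLE = 2   # plans, tracks state, retries or adapts
    HIGH = 3     # continuity, durable memory, bounded proactivity


@dataclass(frozen=True)
class Capabilities:
    """Hypothetical capability flags for a system under review."""
    plans_multiple_steps: bool = False
    tracks_intermediate_state: bool = False
    retries_or_adapts: bool = False
    durable_memory: bool = False
    proactive_within_boundaries: bool = False
    knows_permissions_and_checks: bool = False


def autonomy_level(c: Capabilities) -> Autonomy:
    """Each tier requires everything in the tier below it (an assumption)."""
    middle = (c.plans_multiple_steps
              and c.tracks_intermediate_state
              and c.retries_or_adapts)
    high = (middle
            and c.durable_memory
            and c.proactive_within_boundaries
            and c.knows_permissions_and_checks)
    if high:
        return Autonomy.HIGH
    if middle:
        return Autonomy.MIDDLE
    return Autonomy.LOW
```

Note that a system with durable memory but no planning or recovery still lands at `Autonomy.LOW` here, which matches the point that persistence alone does not make something better.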

Beginners need clarity. Advanced builders need sharper distinctions.

If you’re new to this, the simple mental model is:

- chatbot = talks

- assistant = talks and does tasks

- workflow = follows a predefined multi-step process

- agent = chooses actions and moves a goal forward within boundaries

- persistent agent = does that with continuity, memory, and a longer-lived operating model

If you’re more advanced, the useful distinctions are slightly different:

- tool use vs decision-making

- scripted flow vs bounded initiative

- deterministic execution vs non-deterministic choice

- statelessness vs continuity

- single-step success vs verified outcome

- ephemeral execution vs persistent operating context

- capability breadth vs control quality

Those distinctions matter more than the branding.

Because in practice, the hard part is not getting a model to “act.” The hard part is getting it to act coherently, safely, and reliably enough that the behavior is useful outside a demo.

The real question is architectural

When someone says they built an AI agent, the word itself tells me very little.

The better questions are:

- What tools can it use?

- What decisions can it make on its own?

- What state does it preserve?

- What boundaries does it operate under?

- What parts are deterministic?

- Where is bounded non-determinism allowed?

- How does it verify outcomes?

- When does it escalate to a human?

- Is it ephemeral, session-based, or persistent?

- Is it actually choosing, or just following a glorified script?

Those questions reveal the architecture. And the architecture matters more than the label.

This is one reason I think a lot of the current market is talking past itself. One team is showing a LangChain tool loop and calling it an agent. Another is building a LangFlow orchestration and calling it an agent. Another is wiring LLM prompts into n8n and calling it an agent. Another is building a persistent runtime like OpenClaw with memory, tools, routing, and subagents and calling it an agent.

They are not wrong to use the term. They are just describing very different things.

The word has become too overloaded to stand on its own.

My working definition

So here is the definition I find most useful:

An AI agent is a non-deterministic, model-driven system that can interpret goals, choose actions within defined boundaries, use tools or external resources to affect the world, and carry state across multiple steps in a way that meaningfully reduces the need for constant user micromanagement.

That definition is intentionally stricter than “LLM with tool calling,” but looser than “fully autonomous machine employee.”

It leaves room for different kinds of agent systems:

- lightweight task agents

- planner/executor setups

- orchestrated multi-agent systems

- persistent personal agents

- enterprise agents with approvals, memory, and governance layers
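The working definition can also be read as a checklist. The sketch below encodes it as a small Python dataclass. `SystemProfile` and its field names are hypothetical, and the last clause of the definition (reducing the need for micromanagement) is a judgment call that a boolean cannot capture, so it is left out.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class SystemProfile:
    """Hypothetical description of a system, filled in by whoever reviews it."""
    name: str
    model_chooses_actions: bool      # non-deterministic, model-driven choice
    interprets_goals: bool
    boundaries: List[str] = field(default_factory=list)  # permissions, approvals, limits
    tools: List[str] = field(default_factory=list)       # tools or external resources
    carries_state_across_steps: bool = False

    def unmet_criteria(self) -> List[str]:
        """List which parts of the working definition the system does not satisfy."""
        missing = []
        if not self.model_chooses_actions:
            missing.append("model-driven choice of actions")
        if not self.interprets_goals:
            missing.append("goal interpretation")
        if not self.boundaries:
            missing.append("defined boundaries")
        if not self.tools:
            missing.append("tools or external resources")
        if not self.carries_state_across_steps:
            missing.append("state across steps")
        return missing

    def meets_working_definition(self) -> bool:
        return not self.unmet_criteria()
```

A scripted flow with one HTTP tool, for example `SystemProfile("scripted flow", False, False, tools=["http"])`, fails four of the five checks. That does not make it a bad system. It makes it a workflow, and naming it that way is the point.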

What matters is not whether the UI feels magical. What matters is whether the system is actually doing agentic work.

Why this distinction matters

This is not just semantics.

If we are loose with the term, we design loosely too. We start neglecting:

- verification

- permission models

- memory design

- escalation logic

- deterministic vs non-deterministic boundaries

- failure handling

- persistence

- identity

- control

We also start overclaiming what our systems can do. A lot of current “agent” discourse quietly assumes competence that the architecture has not actually earned.

That is how people end up shipping brittle systems, calling them autonomous, and then acting surprised when they fail in predictable ways.

The more useful approach is boring but better:

- describe the system honestly

- name the architecture clearly

- distinguish workflow from agency

- distinguish automation from autonomy

- distinguish deterministic control from bounded non-determinism

- distinguish tool access from judgment

Once you do that, the term agent becomes useful again.

Final thought

I do think AI agents are real. I also think the term is currently being used too generously.

Not every chatbot with tools is an agent.

Not every workflow with an LLM is an agent.

Not every LangChain app is an agent.

Not every LangFlow graph is an agent.

Not every automation deserves the autonomy label.

But some systems really are agentic, and the difference is not vibe. It is architecture.

If we want to build serious agent systems, we should stop treating “agent” as a marketing upgrade and start treating it as a design claim that has to be earned.
